AI Built for Security Teams, Not Generic Use

More than a dozen purpose-built AI capabilities are woven into every module, each calibrated for compliance accuracy rather than general conversation, and each grounded in authoritative regulatory data and your organization’s actual posture.

RAG-Powered Compliance Chat

Seven dedicated framework assistants, each grounded in its regulatory corpus plus your organization’s data. Ask in plain English and get answers with clickable citations back to the actual rule text.

Semantic Search

Search by meaning across risks, meetings, policies, findings, and evidence. Ask questions in natural language and get relevant results regardless of wording.

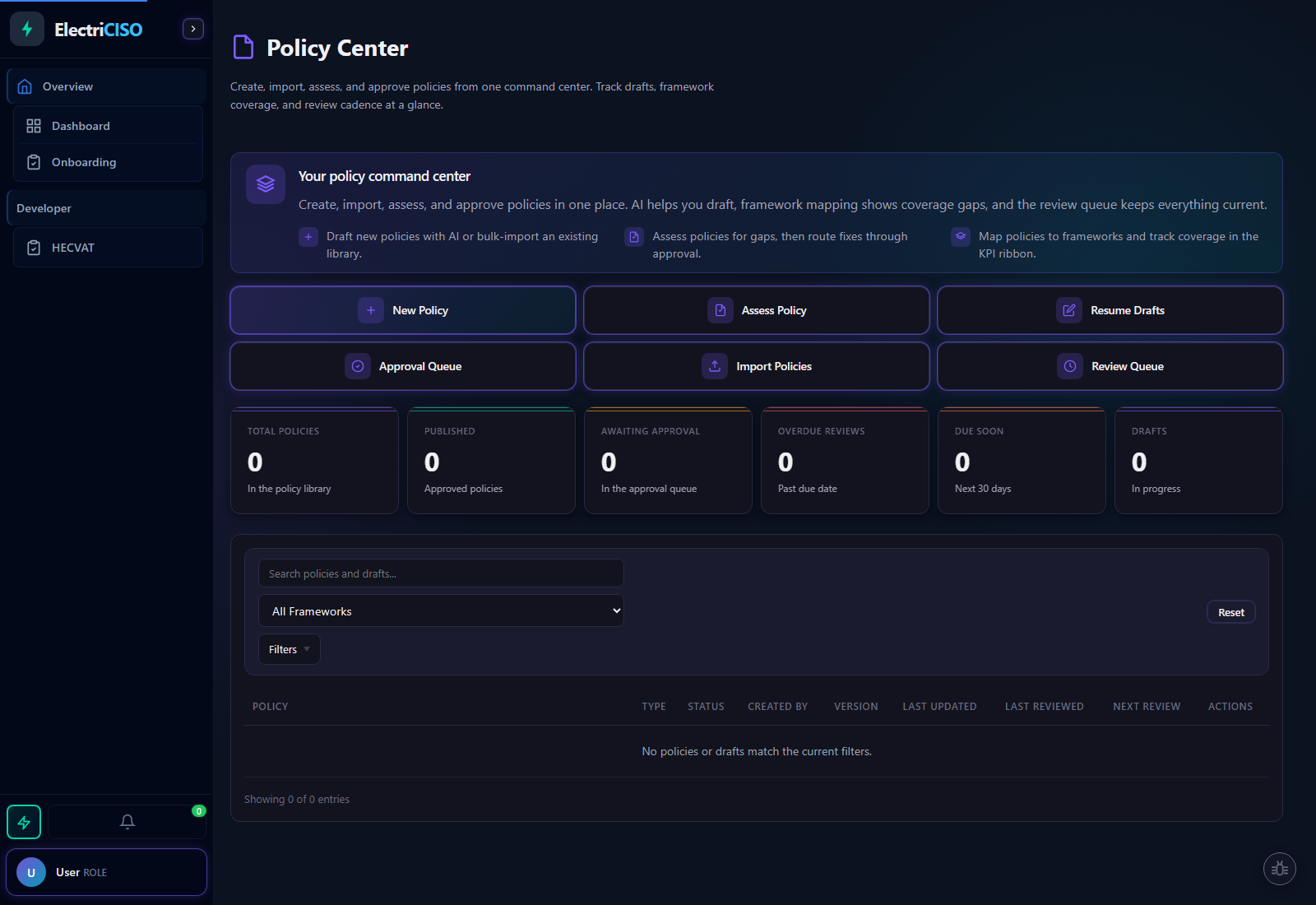

Policy Generation

Transform requirements into structured, professional security policies with framework-aligned titles, outlines, and section-by-section drafting.

Risk Enrichment

AI identifies duplicate risks via semantic matching before they pollute your register. Three-signal detection (vector similarity, trigram title matching, and keyword overlap) assigns a confidence rating (High/Medium/Low) to every flag.
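
As a rough illustration of how three such signals could combine into a confidence rating, here is a minimal Python sketch. Everything in it is an assumption made for illustration, not the product's actual implementation: the function names, the thresholds (0.85, 0.5, 0.4), the stop-length filter for keywords, and the rule that the number of agreeing signals sets the rating.

```python
import math
import re


def cosine(a, b):
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def trigrams(text):
    """Set of 3-character windows over a lowercased, space-padded string."""
    padded = f"  {text.lower()} "
    return {padded[i:i + 3] for i in range(len(padded) - 2)}


def keywords(text):
    """Lowercased words longer than three characters (a crude keyword filter)."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 3}


def jaccard(a, b):
    """Overlap of two sets as |intersection| / |union|."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def duplicate_confidence(vec_a, vec_b, title_a, title_b, body_a, body_b):
    """Return 'High', 'Medium', 'Low', or None based on how many signals fire.

    All thresholds below are hypothetical values chosen for the example.
    """
    signals = [
        cosine(vec_a, vec_b) >= 0.85,                          # vector similarity
        jaccard(trigrams(title_a), trigrams(title_b)) >= 0.5,  # trigram title match
        jaccard(keywords(body_a), keywords(body_b)) >= 0.4,    # keyword overlap
    ]
    return {3: "High", 2: "Medium", 1: "Low"}.get(sum(signals))
```

In this sketch a candidate pair is flagged when at least one signal fires, and agreement between independent signals raises the rating. A weighted score would be an equally plausible design; the count-based rule is used here only because it is easy to read.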

Threat Intelligence Triage

A multi-signal algorithm scores every incoming threat for relevance and severity. Raw CVE bulletins and breach notifications are transformed into structured executive briefings with action steps and ready-to-import risk entries.

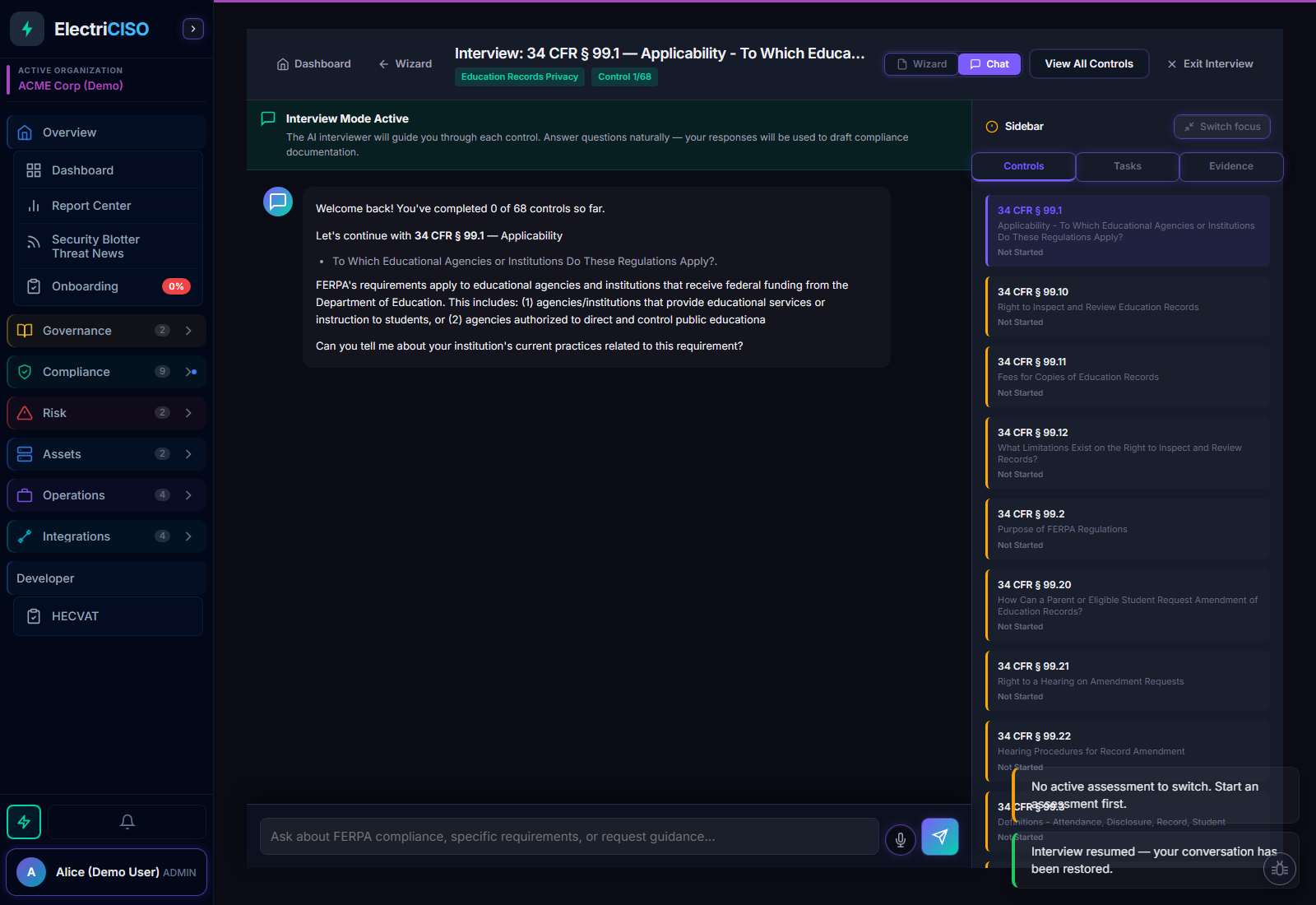

AI Interview Mode

A professional interviewer that explains the requirement, asks one question at a time, adapts based on your answers, and drafts a formal compliance response from your specific inputs, not generic boilerplate.

Incident Analysis

Real-time Perplexity Sonar search runs during active investigations, pulling current threat actor techniques, patch details, and CVE context directly into the incident chat, without leaving the platform.

Assessment Drafts

AI-generated formal assessment responses incorporating your answers, org context, gap analysis, and evidence suggestions.

Voice Input / Ramble Mode

Browser-based speech-to-text for all compliance chat interfaces. Speak your assessment answers naturally; no audio is ever sent to the server. Org-level toggle for privacy compliance.

CrossWalker Mapping Intelligence

CrossWalker evaluates overlap across 42 directed framework pairs using curated source material and human-review gates. The point is traceable reuse with provenance, not speculative mapping or blind automation.
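
One way to read "42 directed framework pairs": if the mapping covers seven frameworks (an assumption, suggested by the seven framework assistants mentioned earlier but not stated outright), every ordered source-to-target combination gives 7 × 6 = 42 pairs. The sketch below uses hypothetical placeholder names rather than the actual frameworks.

```python
from itertools import permutations

# Hypothetical placeholders; the real framework list is not given here.
frameworks = [f"framework_{i}" for i in range(1, 8)]

# Directed pairs: (A, B) and (B, A) are distinct mappings, and no
# framework is paired with itself.
directed_pairs = list(permutations(frameworks, 2))

print(len(directed_pairs))  # 42
```

Treating pairs as directed matters because a mapping from A to B does not guarantee the reverse: a control that fully satisfies a requirement in one framework may only partially cover its counterpart in the other.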

AI Task Decomposition

Any task, whether generated from a compliance gap, an incident action item, or a policy review, can be broken into 4 to 8 specific, actionable subtasks with one click. Review AI suggestions and create them all in a single action.

Attestation Template Generation

Describe a policy or procedure and AI drafts the full attestation text, description, scope, and acknowledgment language, ready for review and team sign-off. No blank-screen drafting.